AI-Powered Age Assurance

Meta's version of the GUARD Act

Key Sources:

https://about.fb.com/news/2026/05/ai-age-assurance-teens/

Analysis by Julie Barrett: https://x.com/juliecbarrett

In a previous article we provided a detailed walk-through and analysis of the GUARD Act currently working its way through Congress. You can read that full article here. The legislation basically defines AI chatbots and companions as inherently dangerous to minors due to their deceptive nature, mimicry of human personality, claims to be authoritative, their transformative effect on users, and because of the content that can be generated or accessed via them. All of this combined presents to minors a highly addictive platform that is radicalizing our youth. The GUARD Act codifies this legally, setting for us a new moral concern about the nature of this technology.

It should be noted that this harm, and the use by minors, is the fruit of how the creators of these AI services want their technology to be used. The most commonly recommended uses of xAI’s Grok and OpenAI’s ChatGPT include serving as a companion, personal advisor, source of truth, and generator of images and videos that fulfill people’s fantasies. The marketing of these use cases is very aggressive, and there are plenty of reports documenting this “normal” use and the widespread harm it is causing.

Yet Congress has found that this designed and intended use of AI chatbots is leading minors to commit acts of self-harm, up to and including suicide. The problem is so bad that legislation prohibiting minors from being able to use AI chatbots at all has unanimous support in Congress so far. In fact, as we reported, the Congressional lobbyists behind this legislation are so concerned about it that they are willing to bypass the normal spheres of authority and influence for minors and appeal directly to the last resort - Federal law.

The problem, as we outlined in our past article, is not only found in the content available via AI, but also in the very form and structure of how AI has been presented to the common user for common use. We cover the deceptive nature and its dangers in numerous articles, most recently here, and also the concern of its transformative nature here.

While the GUARD Act gives technology companies implementation guidance, defining how they should design and integrate age verification and access control capabilities to prevent minors from accessing AI, it also leaves room for those companies to define their own approach. It further contextualizes the legal “offense” as bound to certain types of content and conduct accessible by teens via AI.

In our analysis we warned that if Congress does not explicitly control how the law must be implemented, Big Tech will transform the new mandate to their advantage in a way that increases their power rather than diminishes their influence. Remember that it is Big Tech who first produced, made available, and marketed this technology to those whom it is directly harming to such a degree that Congress feels compelled to act with urgency. If we leave the “solutions” up to them, then they will grow in power, now backed by federal law. This can all very quickly bring us to full-fledged digital ID, which would, again, change the nature of civil rights and the Internet. It would also expand concerns about privacy and give legal protections to Big Tech for things we once considered privacy violations.

Well, it seems Meta has caught on and is ahead of the game.

In a press release dated May 5, 2026, along with a media blitz, Meta has disclosed their plans to use active user profiling to guess the age of their users and to leverage that AI-generated determination to control individual user access and use of the platform and its services. Meta isn’t the only one taking this alternative path. Discord has mentioned a very similar plan and discussion among tech providers of age-based restrictions is now hitting headlines daily.

Meta’s plan has been named “AI Age Assurance for Teens,” and the company is actually encouraging Congress to pass legislation like the GUARD Act and is asking parents to voice their support for it. In fact, Meta is working with a lobbying campaign called the Digital Childhood Alliance to this end. Given Meta’s predatory history and well-documented harmful impact on minors, we should pause when Meta is excited. Here’s a link to their announcement: https://about.fb.com/news/2026/05/ai-age-assurance-teens/

Meta’s press release has this to say about encouraging legislation to mandate age-based verification for online resource access controls (i.e. Digital ID):

“While we’re investing heavily in our own age assurance technology, we know that no single company can solve this challenge alone. We believe legislation should require app stores to verify age and provide apps and developers with this information so that they can provide age-appropriate experiences, like Teen Accounts. Importantly, this approach is supported by 88% of US parents.”

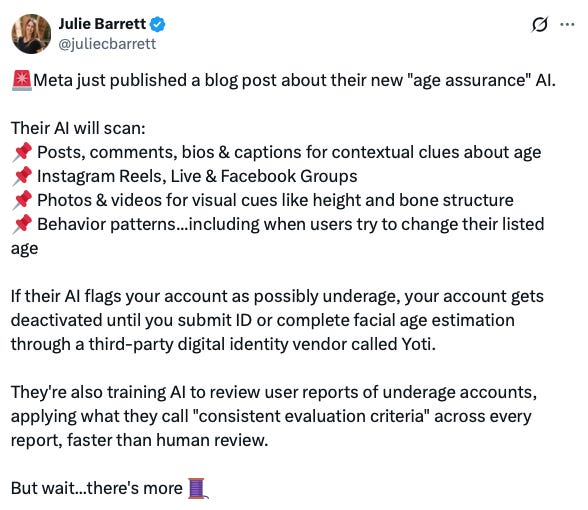

Julie Barrett, a digital health and parental responsibility advocate and founder of Conservative Ladies of America, summarized Meta’s approach in this way:

Part of the “more” that Barrett points out is the Meta-funded lobbying firm claiming to be childhood safety advocates who are lobbying parents and Congress, which would give Meta a public mandate (and legal protection) to do all this:

Meta’s announcement of this new practice starts this way:

“We want young people to have safe, positive experiences online. That’s why we automatically place teens in default, age-appropriate experiences, like Teen Accounts. Today, we’re sharing updates on the age assurance technology we use to help ensure teens are in the right experiences for their age. This includes a deeper look at our ongoing work to strengthen underage enforcement, including the addition of AI visual analysis and other advancements; expanded protections for teens who we suspect misrepresent their age on Instagram in the EU and Brazil, and on Facebook in the US; and our ongoing efforts to help parents to talk to their teens about providing the correct age online.”

Meta believes their services are only meant for people who are 13 years and older, and they explain their approach to enforcing this in the following ways:

“This includes using AI technology to analyze entire profiles for contextual clues, such as birthday celebrations or mentions of school grades, to determine if an account likely belongs to someone underage. We look for these signals across various formats, like posts, comments, bios, and captions, and we’re continuing to expand this technology across additional parts of our apps like Instagram Reels, Instagram Live, and Facebook Groups. If we determine an account may be underage, it will be deactivated and the account holder will need to provide proof of age through our age verification process to prevent their account from being deleted.

We’re also adding visual analysis as a new technique to aid our detection efforts. This technology allows our AI to scan photos and videos for visual clues about a person’s age that text might miss. We want to be clear: this is not facial recognition. Our AI looks at general themes and visual cues, for example height or bone structure, to estimate someone’s general age; it does not identify the specific person in the image. By combining these visual insights with our analysis of text and interactions, we can significantly increase the number of underage accounts we identify and remove.”
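To make the text-based part of this concrete, here is a minimal sketch of how “contextual clues” such as birthdays and school grades could be pulled out of ordinary posts. This is our own illustration and not Meta’s system: the patterns, the function names `age_hints` and `likely_underage`, the grade-to-age rule, and the thresholds are all assumptions chosen for demonstration.

```python
import re

# Hypothetical patterns for illustration only; these are NOT Meta's actual signals.
AGE_PATTERNS = [
    re.compile(r"\b(?:turning|turned|i'?m|i am)\s+(\d{1,2})\b", re.IGNORECASE),
    re.compile(r"\bhappy\s+(\d{1,2})(?:st|nd|rd|th)\s+birthday\b", re.IGNORECASE),
]
GRADE_PATTERN = re.compile(r"\b(\d{1,2})(?:st|nd|rd|th)\s+grade\b", re.IGNORECASE)


def age_hints(text):
    """Return every age guess that can be read out of one piece of text."""
    hints = []
    for pattern in AGE_PATTERNS:
        hints += [int(age) for age in pattern.findall(text)]
    # Rough assumption: a US student in grade N is about N + 5 years old.
    hints += [int(grade) + 5 for grade in GRADE_PATTERN.findall(text)]
    return hints


def likely_underage(posts, threshold=13, min_signals=2):
    """Flag an account when enough separate hints point below the threshold age."""
    hints = [hint for post in posts for hint in age_hints(post)]
    under = [hint for hint in hints if hint < threshold]
    return len(under) >= min_signals


teen_posts = ["Happy 12th birthday to me!", "Can't wait for 7th grade next year"]
parent_posts = ["I'm 34 and love hiking", "My kid starts 3rd grade on Monday"]
print(likely_underage(teen_posts))    # both posts hint at age 12
print(likely_underage(parent_posts))  # one stray hint (the child's grade) is not enough
```

Notice that the parent’s post about a child’s grade still produces an “underage” hint. Even in a toy like this, the system has to read everything you write to make its guess, and it can guess wrong.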

This all highlights several things we’d like to bring to your attention.

First of all, Meta’s approach here highlights the power and capabilities they already have. In fact, we have documented this power in previous articles, including our Data Monetization piece. Christian author Rod Dreher also writes of this capability and its power in his book Live Not by Lies, as does Jacob Siegel in his book The Information State.

Meta has vast capabilities that they have long used to automatically analyze you, profile your activity down to extreme details, and use that to curate an online experience specific to you. Not only do they use this to show you what they think you want to see, but they use this to show you what they want you to be aware of, thinking about, and believing. This capability existed long before we called it AI, and in fact it is the foundational technology that led to what is now called AI.

All of our online behavior is being constantly monitored. Bits and pieces of information are collected via your web browser, your ISP, the websites you visit, the services you use, even the apps you use. As we covered in our piece analyzing the security of Meta’s WhatsApp communications app, many technology companies even intentionally create loopholes in their privacy policies and product architectures so they can collect information that you would probably consider private and sensitive.

Data monetization is HUGE business in Big Tech. In fact, it’s the leading business model for revenue generation. It is what funds almost all of the “free” services we use, from search engines to web browsers to apps in the app stores. Many Big Tech companies intentionally collect more information about their customers than they need to, so they can sell that information (we call it metadata) to data brokers (Meta and Google being two of the largest). Data brokers then take all the data they have about all of the users of these various platforms and tools, and stitch that information together to create profiles ranging from demographics about people groups down to individual users. Some of these profiles are so specific that they describe people’s lives in details we are unaware of ourselves.
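As a rough illustration of what “stitching” means, the sketch below merges records from two unrelated apps into one profile by matching on a shared identifier. Everything here is hypothetical: the app names, the fields, the sample data, and the `stitch_profiles` function are our own assumptions, and real data brokers use far more sophisticated matching than a single email address.

```python
from collections import defaultdict


def stitch_profiles(*sources):
    """Merge records from several data sources into one profile per person.

    Assumes every record carries an "email" field to match on (a simplification).
    """
    profiles = defaultdict(dict)
    for records in sources:
        for record in records:
            # Normalize the identifier so small differences do not hide a match.
            key = record["email"].strip().lower()
            for field, value in record.items():
                if field != "email":
                    profiles[key][field] = value
    return dict(profiles)


# Hypothetical data sold by two unrelated "free" apps.
shopping_app = [{"email": "Pat@example.com", "zip": "30301", "purchases": ["stroller"]}]
fitness_app = [{"email": "pat@example.com ", "age_range": "25-34", "gym_visits": 12}]

profile = stitch_profiles(shopping_app, fitness_app)["pat@example.com"]
print(profile)
```

Neither app alone knows very much, but the combined record already suggests a new parent in a specific zip code and age range. Each additional source makes the picture sharper.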

In fact, several years ago, Meta was exposed for creating “ghost” accounts for users who were not Meta customers. They were using purchased and collected information about people who did not yet use Facebook to prepare Facebook accounts for them. Then, using partnerships with advertisers, they lured those people toward creating Facebook accounts, and as soon as they signed up, those new customers found an experience that was pre-tailored to them, including lists of recommended friends.

Indeed, WhatsApp (part of Meta) was exposed for harvesting too much information from its users by scanning the entire Contacts app on each user’s phone and uploading that information to WhatsApp. All the contact information a WhatsApp customer had was collected, stored, and used by Meta. This is one way that Meta can passively collect information and use it for behind-the-scenes profiling and product development. It is a major privacy concern.

Now, Meta is disclosing how they can use what has long been considered an inside secret and capability they haven’t wanted their users to be aware of, in order to meet government regulations. That means they now see the Federal Government as an ally to what I consider the intentional weaponization of their own platforms, and they are disclosing it in the name of public (child) safety.

Now, this is no surprise to those who have been paying attention to these issues. But again, this backend capability that is the basis of what makes the platform addictive and radicalizing in nature, which Meta has recently been penalized in court for, is essentially becoming mandated by the US Government, and Meta is all for it. They no longer have to hide. In fact, they can use this new mandate to expand what sorts of data they need to collect and analyze. They can use it to change their privacy policies in the name of collecting new and essential information to improve their age-validation models - all in support of complying with government regulations.

This not only unlocks the path forward to the functional equivalent of Digital ID, but it also unlocks new privacy concerns and a new precedent for legal protections of Big Tech for their data harvesting practices. Regarding Digital ID, all that the tech providers need to implement it is our use of their platforms and tools. It’s now being built in as the front door to accessing what they offer.

Regarding privacy, just think about Facebook scanning all your uploaded images and profiling the attributes of what they contain: who is in them, where those people are, what’s around them. Now think about that being used to identify who was where and with whom else. Let’s say you were “there” when an event of significance happened and uploaded a picture of yourself and those around you, and Meta or the government or law enforcement wants to find everyone “involved” based on their presence at the event. Meta already has that capability, but now it’s being protected by law and can easily be harvested, either through unauthorized access or in cooperation with political entities, to track people of interest and potentially sanction their use of online resources. That’s the potential next step from where we are today.

Indeed, just a few years ago, Apple attempted to implement a capability within their iCloud service that would scan all uploaded photos from all users to attempt to identify signs of child abuse or the exploitation of children. That attempt was met by major public backlash over privacy concerns, namely the risk of misidentification of potential abuse and the risk of false legal accusations, but also because people want their content to be regarded as private and not used for purposes other than what they explicitly allow.

If Meta is an example, Big Tech doesn’t care about those concerns, and now has legal precedent to blatantly ignore them.

Key Takeaways

All of this serves as a great reminder of what you give up when you sign up for and use free online services, like Meta and all their sub-products, including WhatsApp. But remember also, as we have covered previously, that there is an entire industry built around creating new “free” apps and services for the explicit purpose of collecting new information from an ever-expanding number of people in different situations and stations of life. Indeed, the Band App, popular among schools and youth sports, was designed to reach that demographic and to harvest unique information from them.

When you use these services, you lose your privacy. Everything you post, comment on, share, upload, view, etc. is known to them and is used by them to profile you and then create your unique product experience. All of this is done in service to their ends.

And all that you disclose through the use of these apps is used to generate revenue (you are the product) and to influence you through advertising and curated experiences. Far beyond luring you into buying new things online, this capability is actively being used to shape American culture and politics as well. It is the transformative nature of these platforms, and of “curated” experiences based on user profiling, that has a radicalizing effect on all of us. That effect is what Congress is so concerned about and wants to curtail (at least for minors).

Meta’s approach highlights the risk of poor legislation. The authors of the GUARD Act clearly meant for the legislation to restrict Big Tech and protect kids from radicalization, yet it is also providing Big Tech with incentive, authority, and a mandate to grow in power and control.

This precedent will expand. Talk of digital ID and age-based access control restrictions in the name of public safety is spreading throughout the globe and through our online providers. The danger is that it will expand in terms of the definition of safety, and of who should have access to what, based on terms governed by politicians and interpreted by Big Tech.

Our recommendations:

Be very careful about the apps and services you use online. Avoid “free” and avoid “public,” but know that even what you share in private can be used against you. What you do with your online resources discloses who you are, and that is rapidly becoming the basis by which you will be allowed to participate online.

Use the resources of Practive Security to learn about safe online practices and how to optimize your privacy and the control of your data. We have dedicated articles and presentations about secure communications, secure web browsing, privacy, encryption and more available to our Essentials Members on our website.

None of these so-called protections built by the platform providers will be sufficient for protecting our children. Moderating content is only part of the challenge and misses the risk of the transformative nature of the technology itself. Parents need to be directly involved in shepherding their kids in the use of online resources. We have more available in our parenting seminar, with free access to part 1 available here:

For Congress - see this as an example of how good intent rushed to action in the name of safety can be used against us by shrewd technology providers who are professionals at manipulation and deception.